How Does This 3D Stuff Work, Anyway?

Part 3, Animating and Rendering

This is the third part of a series of articles about creating 3D graphics. First we talked about how 3D software lets you describe the geometric shape of the objects in a scene, a process referred to as Modeling. Then we talked about describing the color and appearance of those objects, using a Properties or Material Editor. Now we’re finally ready to talk about how one animates those objects, and then how they become rendered pictures.

Animation

Often when someone first sees computer animation they ask something like, “I don’t get it. Do you have to draw all of these pictures yourself?”

The answer, of course, is no, thank goodness, because I have better things to do than determine what moving colored objects will look like 30 times each second (for video, or 24 times each second for film). This is the main benefit one gets from using computers in such a process. In fact, computers would love nothing better than to figure out that stuff for you. Let’s put it this way: computers have no life.

To animate these objects, all you have to tell the computer is the position, rotation, and any distortions applied to the objects, lights, and cameras in a scene at critical moments during the animation, often referred to as keyframes.

If an object starts at the center of the screen at frame 0 and ends up at the upper right-hand corner at frame 90 (at 30 frames per second, or 30 FPS, this would be a three-second move), having turned an eighth of a turn (45 degrees) away from you along the way, those two keyframes might look like this:

Frame 0:

Position (0,0,0)

Rotation (0,0,0)

Frame 90:

Position (300,100,0)

Rotation (0,45,0)

As you might be able to figure out, the position is a three-part number which describes the object’s position using X (left to right in width), Y (up and down in height) and Z (in and out in depth) coordinates in a kind of 3-dimensional grid. The rotation describes the object’s orientation in space, perhaps in degrees. Now this example is somewhat simplified (as is my explanation...) but you can see how the computer could use this information to determine where the object would be halfway between frames 0 and 90 (that is, at frame 45). If it was moving at a constant speed (neither accelerating nor decelerating) it would be at:

Frame 45:

Position (150,50,0)

Rotation (0,22.5,0)

Hey, how about that? And I’m not even a computer. This is essentially what an animation program does for you. It takes the information you supply it at certain keyframes and interpolates all of the in-between frames for you. All you have to do is say, “It starts here, then goes here, and turns this way, and then it holds for 30 frames, then it flies here and rotates for 60 frames, and then it blows into a zillion pieces.”
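For the curious, here is a minimal sketch in Python of that in-betweening, assuming the simplest possible case: constant speed in a straight line between two keyframes. The names `lerp` and `interpolate`, and the way a keyframe is stored as a (frame, position, rotation) tuple, are made up for this illustration and are not taken from any particular animation program.

```python
def lerp(a, b, t):
    """Blend from a to b in a straight line as t goes from 0.0 to 1.0."""
    return a + (b - a) * t


def interpolate(key_a, key_b, frame):
    """Return the in-between (position, rotation) at the given frame."""
    frame_a, pos_a, rot_a = key_a
    frame_b, pos_b, rot_b = key_b
    # How far along we are between the two keyframes, from 0.0 to 1.0.
    t = (frame - frame_a) / (frame_b - frame_a)
    position = tuple(lerp(a, b, t) for a, b in zip(pos_a, pos_b))
    rotation = tuple(lerp(a, b, t) for a, b in zip(rot_a, rot_b))
    return position, rotation


# The two keyframes from the example: (frame, position, rotation).
start = (0, (0, 0, 0), (0, 0, 0))
end = (90, (300, 100, 0), (0, 45, 0))

position, rotation = interpolate(start, end, 45)
print(position)  # (150.0, 50.0, 0.0)
print(rotation)  # (0.0, 22.5, 0.0)
```

Calling `interpolate` once for every frame from 0 to 90 would give the whole three-second move. Real programs usually offer curved paths and smarter handling of rotation, which this sketch ignores.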

Of course, all of those specifics, like the ability to blow things into a zillion pieces, or even the ability to understand a made-up number like zillion, must be written into your program, so your mileage may vary in this case.

Anything in a scene may be animated: an object’s position, scale, rotation and visibility, a virtual camera with a virtual, changeable lens, the lights and their shape and intensity, as well as anything else in the scene which can be described numerically (which is to say everything).

Rendering

After describing the position of the elements of a scene at those keyframes, and after testing the motion that the computer interpolates from that information (adding ease-ins, smoothing unnatural movement, etc.) the time finally comes to render your scene.
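An ease-in is easy to picture in code. The sketch below, again in Python, bends the steady 0-to-1 progress between two keyframes into a curve that starts slowly and finishes slowly. The curve used here is the well-known "smoothstep" formula, chosen only as an example; the function names are hypothetical, and a given animation package may use a different curve entirely.

```python
def ease_in_out(t):
    """Smoothstep curve: slow start, faster middle, slow finish (t from 0 to 1)."""
    return t * t * (3 - 2 * t)


def eased_value(start, end, frame, first_frame, last_frame):
    """Value at `frame` when moving from start to end with an ease-in and ease-out."""
    t = (frame - first_frame) / (last_frame - first_frame)
    return start + (end - start) * ease_in_out(t)


# X position of the object from the earlier example, moving 0 -> 300 over frames 0..90.
for frame in (0, 15, 45, 75, 90):
    print(frame, eased_value(0, 300, frame, 0, 90))
```

At frame 15 the eased object has covered less ground than it would at constant speed, at frame 45 it is exactly halfway, and by frame 75 it is already slowing down for the stop.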

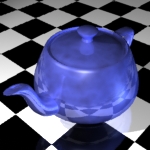

In brief, what the computer does at this point is pull together all of the information you’ve been providing it: the shape of the objects, their color and attributes, the way they are lit and viewed, the way they move, and their position. It then uses all of that to produce an image of one discrete moment in time.

To look deeper at the process (and I’m way over my head here...) the renderer asks basically one question: “What is the color of this pixel here?” It uses everything it knows about the scene to determine this for each pixel in the image. If you were to listen in on this process, it might sound something like:

What object is the front-most object at this pixel’s position from the viewpoint of the camera in the scene? What color is that object after taking the object’s color, texture, shape, and orientation into consideration? What color is that after adding the effect of lights and subtracting shadows? Is the object solid or transparent? Can you see anything through the object? What’s behind it? What color is the object behind it? ...
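That chain of questions can be turned into a toy program. The Python sketch below is a deliberately tiny stand-in, not how any real renderer is written: the "scene" is a hypothetical pair of flat discs facing the camera, there is a single light level applied to everything, and shadows, textures, and perspective are left out. Every name in it is invented for illustration.

```python
BACKGROUND = (0, 0, 0)
LIGHT = 0.8  # one overall light level, from 0.0 (dark) to 1.0 (full)

# Hypothetical scene: flat discs; a smaller depth means closer to the camera.
scene = [
    {"center": (4, 4), "radius": 3, "depth": 5.0, "color": (255, 0, 0), "opacity": 1.0},
    {"center": (6, 4), "radius": 3, "depth": 2.0, "color": (0, 0, 255), "opacity": 0.5},
]


def covers(obj, x, y):
    """Does this disc sit over the pixel at (x, y)?"""
    cx, cy = obj["center"]
    return (x - cx) ** 2 + (y - cy) ** 2 <= obj["radius"] ** 2


def color_seen(hits):
    """Color seen through a list of objects sorted nearest first."""
    if not hits:
        return BACKGROUND  # nothing there, so we see the background
    front, rest = hits[0], hits[1:]
    lit = tuple(c * LIGHT for c in front["color"])  # add the effect of the light
    if front["opacity"] >= 1.0:
        return lit  # solid: nothing behind it can show through
    behind = color_seen(rest)  # transparent: what's behind it?
    a = front["opacity"]
    return tuple(a * l + (1 - a) * b for l, b in zip(lit, behind))


def pixel_color(x, y):
    """Answer the renderer's one question for the pixel at (x, y)."""
    hits = sorted((o for o in scene if covers(o, x, y)), key=lambda o: o["depth"])
    return tuple(round(c) for c in color_seen(hits))


# Ask the question once for every pixel in a small 10 x 8 image.
image = [[pixel_color(x, y) for x in range(10)] for y in range(8)]
print(pixel_color(1, 4))  # (204, 0, 0): the solid red disc alone
print(pixel_color(5, 4))  # (102, 0, 102): red seen through half-transparent blue
```

The nested loop that builds `image` is the part that gets expensive: the same question is asked again for every single pixel.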

And so on, until it’s determined the colors of all of the pixels in that frame. This might take a while for a 720 x 486 pixel image (D1 NTSC video), since it has to determine the color of 349,920 pixels! Now it has to do that 30 times to describe one second of movement. Make that 60 times if you’re rendering in fields. Then it has to do that for the length of the animation. Whew!
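To put numbers on that, here is the arithmetic as a few lines of Python, assuming full frames at 30 frames per second (field rendering is left out). The helper `pixels_for` is just a name made up for this example.

```python
WIDTH, HEIGHT = 720, 486   # D1 NTSC video frame
FRAMES_PER_SECOND = 30

pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * FRAMES_PER_SECOND


def pixels_for(seconds):
    """Total pixels the renderer must color for an animation of this length."""
    return pixels_per_second * seconds


print(pixels_per_frame)   # 349920
print(pixels_per_second)  # 10497600
print(pixels_for(3))      # 31492800, for the three-second move described earlier
```

So even the short three-second move from the animation example means answering the renderer's question more than 31 million times.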

If you get the idea that this is a long, complicated process, you’d be right. Please bear in mind that this whole explanation has been a gross oversimplification of the whole process in an attempt to make it understandable. I hope, however, that it has been of some use to you.